|

BackSelf Organized Polymer (SOP) model implemented on GPU (SOP-GPU)Why SOP model?SOP is a structure-based coarse-grained model of a polypeptide chain, which described the time-evolution of the chain by following the Langevin dynamics in three dimensions. The model uses a simple and computationally light yet accurate force field in terms of describing the macroscopic physical properties of a biomolecular system. Previous studies have shown that the SOP model describes well the mechanical properties of proteins, including the Green Fluorescent Protein [1], the tubulin dimer [2] and kinesin [3]. The SOP-model has also been employed to explore the kinetics and to map the free energy landscape of various biomolecules such as the tetrahymena ribozyme [4], riboswitch aptamers [5], GroEL [6], DHFR [7] protein kinase A [8] and myosin V [9]. In the SOP model, each amino acid residue of a chain is representated by just one Cα-particle. In addition, Langevin simulations are accelerated on a GPU ~10-200-fold, depending on size of the system in question. A combination of these factors enables one to follow the system dynamics on a very long centisecond to second timescale. This is a range of experimental timescales in the Atomic Force Microscopy (AFM) and optical tweezer-based dynamic force measurements on single proteins, multimeric proteins and protein tandems, protein-protein complexes and aggregates, protein fibers, and protein assemblies. Currently, it remains virtually impossible to reach the biologically relevant millisecond to second timescales even for a very small system of a few tens of residues using conventional all-atom Molecular Dynamics (MD) methods in explicit and implicit solvent (water) implemented on the most powerful distributed computer clusters. In the context of dynamic force measurements in silico, force-loading conditions used in all-atom MD simulation approaches (the force-loading rates and cantilever tip geometry), are different from those employed in the experimental dynamic force microscopy. In this regard, the SOP-GPU model incorporated into the SOP-GPU package is the only known approach, which enables researchers to explore the mechano-chemical properties of biological systems

This is farely important, rendering the fact that the kinetics (pathways, intermediate states), thermodynamics (transition barriers and working distances), and mechanisms all change with the rate rf of a force load fext=rft (force-ramp), and with the force level fext=const (force-clamp). When the X-ray structure of a biological system is known (from Protein Data Bank or other depositories), the SOP-GPU model enables researchers to explore

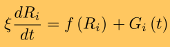

This can be readily achieved in reasonable wall-clock time using the SOP-GPU package. Hence, the results of single molecule experiments and simulations can be compared directly, which helps researchers provide meaningful interpretation of the results of experimental force measurements, i.e. dynamic signatures in the force spectra and force-indentation curves. SOP modelIn the SOP model [1,4,10], each residue is described using a single interaction center (Cα-atom). The dynamics of the i-th Cα-particle are obtained by following the Langevin equations in the overdamped limit:

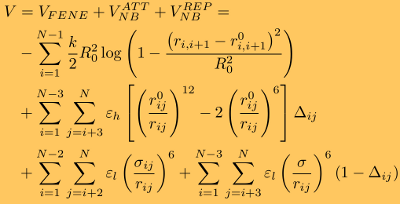

where ξ is the friction coefficient, and f(Ri)=−∂V/∂Ri is the molecular force exerted on the i-th particle due to the potential energy V. Here, Gi(t) is the Gaussian distributed random force with zero mean and delta function correlations (white noise). The random force takes into consideration the stochastic collisions of protein residues with the solvent (water) molecules. The potential energy function of a protein conformation V (force field), specified in terms of the coordinates of the Cα-particles, {R} = R1, R2, ... Rn , is given by

where the distance between any two interacting residues i and i+1 is ri,i+1, whereas r0i,i+1 is its value in the native (PDB) structure. The first term in the equation is the finite extensible nonlinear elastic (FENE) potential, which describes the backbone chain connectivity. Here, R0=2 Å is the tolerance in the change of a covalent bond, and k=1.4 N/m is the force constant. The second term in the equation is the Lennard-Jones potential (VNBATT), which accounts for the native interactions that stabilize the (native) folded state. In the SOP model, it is assumed that when the non-covalently linked residues i and j (|i - j| > 2) are within the cutoff distance RC in the native state, i.e., rij < RC, then Δij=1, and zero otherwise. The value of εh specifies the strength of the nonbonded interactions. The typical values of εh are in the 1.0-1.5 kcal/mol range, depending on the secondary structure element in question. All non-native interactions (fourth term in the above equation) are treated as repulsive (VNBREP). Additional constraint are imposed on the bond angle formed by residues i, i+1, and i+2 by including the repulsive potential with parameters εl=1 kcal/mol and σ=3.8 Å, which quantify, respectively, the strength and the range of repulsion. To ensure self-avoidance of the protein chain, the σ value is set to σ=3.8 Å. Simulation methods

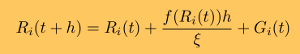

Because GPUs differ from CPUs in several fundamental ways, the CPU-based methods for biomolecular simulations cannot be easily translated nor simply adapted to a GPU. In molecular simulations, the particle-particle interactions are described by the same empirical potential energy function (force field), and the system dynamics is obtained by solving numerically the same equations of motion for all particles. For the SOP model, we have developed the numerical procedures for generation of Verlet lists and random forces, for the computation of forces due to covalent and non-covalent interactions, and for the numerical integration of Langevin equations of motion [10]. A detailed description of the numerical algorithms can be found in our recent paper here.

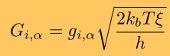

where h is the integration timestep. Benchmark tests of the accuracy of numerical integration, which assess the magnitude of numerical error accumulated over many billions of iterations, show that in long simulations on a GPU device single precision arithmetic and the Ermak-McCammon algorithm can be used to describe accurately the mechanical properties of biomolecules. Langevin simulations require a reliable source of normally distributed (pseudo)-random numbers, gi,α (α = x, y, and z, and = 1,2,...,n), to compute the three components of the Gaussian random force

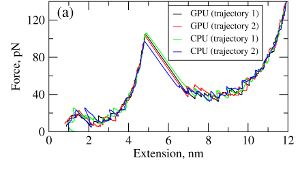

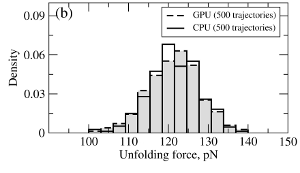

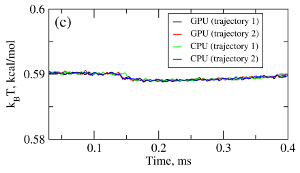

where T is the absolute temperature (kb is the Boltzmann's constant). A (pseudo)-random number generator (RNG) produces a sequence of random numbers ui,α,a uniformly distributed in the unit interval [0,1], which is then translated into a sequence of normally distributed random numbers with zero mean and unit variance (gi,α) using variety of methods. In the SOP-GPU package we utilize the well-known Box-Mueller transformation. A detailed description of the numerical algorithms for the implementation of random number generators (RNGs) on the GPU device is given here. Benchmark simulationsWe have tested the performance of the SOP-GPU package (written in CUDA - a dialect of C and C++ programming languages) on a NVIDIA GPU Tesla C1060 (MIPT), and have compared the results against the performance of the optimized C code (SOP program) on a dual Quad Core Xeon 2.83 GHz of a similar level of technology. We have analyzed the results of CPU- and GPU-based computations by comparing the force spectra, i.e. f versus X force-extension profiles, the distributions of unfolding forces (peak forces in the force spectra), and the average temperature ⟨T⟩, for the all-β sheet WW-domain (see Figure 1). Aside from small deviations due to the different initial conditions, the profiles of f(X) and ⟨T⟩, and the unfolding force histograms, obtained on the CPU and on the GPU, agree very well.

Figure 1. Comparison of the results of pulling simulations for the WW-domain obtained on a GPU and on a CPU (pulling speed vf=2.5μm/s). Panel (a): Representative examples of the force spectrum (force-extension curves). Panel (b): The histograms of unfolding forces. Panel (c): The average temperature of the system as a function of time ⟨T(t)⟩.

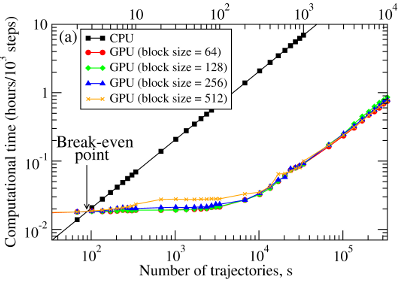

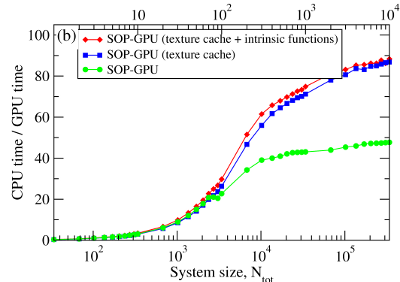

We have compared the overall performance of an end-to-end application of the SOP-GPU program with the heavily tuned CPU-based implementation (SOP program) in describing the Langevin dynamics of the WW domain at equilibrium. We profiled the computational performance of the SOP-GPU program as a function of the number of independent trajectories s running concurrently on the GPU (many-runs-per-GPU approach). While the single CPU core generates one trajectory at a time, the GPU device is capable of running many trajectories at the same time. The results (see Figure 2a) show that, for the WW domain (N = 34), the GPU accelerates computations starting from 3 independent runs, which is equivalent to a single run for a system of N≈102 residues (one-run-per-GPU approach). This is the so-called break-even point. While the simulation time on the CPU scales linearly with s (or with N), the scaling on the GPU in this regime is sublinear (nearly constant) up to N≈104 (s≈300 for the WW domain). At this point, the GPU shows significant performance gains relative to the CPU reaching the maximum 80–90-fold speedup (see Figure 2b). The amount of GPU on-board memory, i.e. ~4 GB (Tesla C1060), is sufficient to describe long Langevin dynamics for large biomolecular systems of ~104 residues, i.e., comparable in size with the fibrinogen dimer (Fb)2.

Figure 2. Panel (a): The log-log plot of the computational time per 1,000 steps of the simulations on a CPU and on a GPU versus the system size, N (one-run-per-GPU approach), and versus the number of independent trajectories running concurrently on a GPU s (many-runs-per-GPU approach), for the all-β-strand WW domain. The GPU performance is tested for the thread blocks of size B = 64, 128, 256, and 512. Panel (b): The log-linear plot of the relative CPU/GPU performance (computational speedup) as a function of N and s. The performance is compared for the SOP-GPU program, and when it is accelerated by using texture cache, and texture cache plus intrinsic mathematical functions.

References

| ||||||